Just to show that it's not all angry rants around here ...

Continuing my mirror of the news items on my projects, I just updated socles to include jogamp 2.0 - fortunately the API was the same so it was just changing the included libraries in the build.

I still have a JOAL patch outstanding but just haven't been in the right mood to work on it for a while. Getting back to work last week was a bit of a shock to the system; but i'm slowly getting back into the groove and will eventually have time and energy left over for hacking.

I've been doing a bit of video stuff which is helping to harden and clean up jjmpeg a bit more, and I have a few minor patches pending for that. I've also been poking very tentatively at a slideshow creator/video compositor: but there's a lot of crap I don't really want to have to write (ugh, timeline anyone) so i'm not exactly making any headway yet.

Tuesday 28 February 2012

WTF Google?

I noticed google search becoming less and less useful of late: it can be quite hard to track down meaningful results, and I started to suspect I was getting my own private view of the internets ... (which is precisely the thing I do not want from a global internet search!!).

So with that in mind check these two results out, one from my signed in google account - with verbatim enabled, and one from another machine in which I'm not signed explicitly into google's world-encompassing spy network.

Standard results:

Verbatim results:

(I also checked the non-verbatim results on my logged in version, which are at least in this case thankfully the same as the standard result; although i'm sure i've seen differences in the past).

One might note that the verbatim result is the only one that has anything related directly to the search terms, particularly for the most important keyword of high specificity - there is in fact no relevant result AT ALL on the first page of the non-verbatim search (as suggested by the title and/or preview content). I really don't think a generic '[Archive]' header applied to old mailing list views should rank highly.

The new search algorithm is quite good at finding home pages for projects and products; but for specific information it's starting to suck major arse.

At least verbatim taught me this isn't quite the search term i'm after anyway.

Saturday 25 February 2012

Cantarell Sucks

Why any designer with any mote of sense would choose Cantarell as a font is beyond me, let alone a system-wide default one. I know it was trendy - nearly 30 fucking years ago - to create a distinctively unique system font; but that was mostly about cost anyway and now there are plenty of decent free fonts available using a number of common formats.

When I re-installed my workstation with a minimal fedora 15, Cantarell was about the only font that came along for the ride by the time I had XFCE up. Which made for a particularly unpleasant experience in Terminal and emacs - until I installed xterm and 'fixed'. Apart from being a disastrously ugly and unreadable font; it decided to use the proportional one as well; so it didn't even work.

Apart from many of the letter forms being simply ugly and out of balance, the kerning and hinting are abysmal (although TBH I think hinting has more than had its day, and we're better off with blurry aa text, even on screens 1024 pixels wide). It is just one fugly font and the only reason I can see that anyone would like it is that their favourite hero endorsed it and the group-think around the hero's heroish aura is suppressing their mind's own ability to reason. I suppose having someone working on free software as a hero is better than worshipping some money grubbing greedy pig-fucker like the late Jobs, or Gates (however, being a brainless sheep is nothing to be proud of); although those money grubbing greedy pig-fuckers are often the inspiration in the first place. Not a fan of the ubuntu font either; which again seems to gain its popularity solely from celebrity endorsement.

(As an aside: people seem to be trained more and more these days not to think. Not to make a stir. To go along with the crowd. Even the wild frontier of the internets has been tamed, flame-wars seem to be banned from most forums and minority views are blatantly suppressed as a matter of open forum policing. As if suppressing and censoring dissenting views somehow makes them go away or is a valid long-term solution ...).

So ... although i've resolved not to go whine on other people's personal blogs (it's like going into someone's house and abusing them), i let my guard slip a bit this morning and posted a long dissenting (but mostly polite) view on a post about GNOME. I really wanted to sleep in but was awake before 6, so I wasn't in the best mood.

Anyway, it ties in with my last rant about 'tabbed desktop'; some of the suggestions are just stupid. Most of the suggestions are just a straight-up rip-off of an iphone, and then the group-think fanbois have the audacity to turn around and accuse people of stifling experimentation if they don't like it or don't think they'll work on a desktop computer? Nothing innovative or experimental about copying an apple interface (which seems to have been the sole `raison d'être' for GNOME ever since Apple's Mac OSX came out). The thought that one application can cater to every possible device is as inane as it is nonsensical. Even less so is the suggestion that one stern style guide can cope with every possible application and user class ...

I'm not sure why I even care; I haven't even used GNOME for years, I didn't even start using GNOME for years even when I was working on GNOME software (iirc until Novell bought ximian and I dropped RedHat 9 and amiwm for development ...); so I never really liked it. I thought gnome2 was bloated, slow, ugly and too limited so my less-than-gold standard of GNOME goodness pre-dates even that.

However, now I think about it, I know why I care: I know how this shit works. It becomes trendy and then everyone starts doing it and suddenly there's nowhere else to go and you've got some fugly font installed by default. Or systemd, or networkmanager, or pulseaudio. Thankfully the font is easy to fix and pulse audio is easy to blacklist (although yum seems to ignore that sometimes), and networkmanager easy enough to bin as well. But it still makes it more difficult to get a working system set up every time, and there's always a chance that some snot like systemd (whose whole purpose seems to be to enable gui tools to poke into areas they shouldn't be involved with in the first place) weaves itself so tightly into the system that it simply cannot be removed.

(Aside again: It would help if systemd wasn't written by an author who clearly doesn't have a clue about system software, and didn't have such a complex and horribly nasty implementation behind it. And it would help if fedora didn't let pricks like this bully their way into such a core system service as init.)

The counter-argument is that it must be a good idea if it's popular and people are using it ... which of course is crap. We all saw how microsoft illegally forced its crapware onto everyone; popularity is not a technical metric, it's a political one. And people are easily manipulated, particularly if they're proud of their inability to think independently.

Although there's one thing that the GNOME developers and I agree on, even if they might not admit it: hacking is fun, and users suck. I wouldn't want to listen to whiney know-it-alls either. Then again, i'm not working on anything that the public relies on for their day to day computing experience ...

I just wish i'd had a good night's sleep now. Headed for an unpleasantly hot (39) and unpleasantly windy (30km/hr+) day today, i'm too tired to think about hacking, bored with tv, movies, and games, and my eyes are really tired from reading the screen too much this week; best hope is to water the garden a bit and maybe have a nap later followed by a couple of cold beers.

Friday 24 February 2012

Scene Graphs

So, today is my weekly RDO and rather than spending it down the beach like I should have, I poked around ...

I was thinking of experimenting with a scene-graph model for GadgetZ (used in ReaderZ); not for any particularly practical reason, just to see how it would work. But I kind of got stuck on how to manage the layout mechanism and then lost interest ... still a bit burnt out from my hacking spree a few weeks ago, and I need a good sleep-in one day to catch up on sleep as well (they're still building next door).

I did however end up spending quite a bit of time playing with the JavaFX stuff. The 32-bit-only build wasn't too much hassle to get up and running on Fedora. It has some (pretty big) issues with focus, and the performance ranges from awesome to barely ok, but it is only a developer preview after all.

Some observations:

- The rendering model looks very interesting. Obviously the aim is to provide a fully accelerated zoomable interface via the scene-graph. This is obviously absolutely the right thing to do.

- And for the most part this works well: it's very snappy when it's snappy.

- It tries to sync the rendering to the display and double-buffer rendering.

- The WebView thing looks pretty decent - it's a whole webkit binding, with dom access and the plan is to move to a JVM based javascript eventually.
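To make the zoomable scene-graph point a bit more concrete, here is a minimal sketch in plain Java. It is not the JavaFX API: the `SceneNode` class and its `add`, `toScene` and `localToScene` methods are hypothetical names made up for illustration, and it only uses `java.awt.geom.AffineTransform` from the standard library. The idea it shows is that each node keeps a transform relative to its parent, so zooming the whole interface only means changing one transform at the root.

```java
import java.awt.geom.AffineTransform;
import java.awt.geom.Point2D;
import java.util.ArrayList;
import java.util.List;

/** Hypothetical minimal scene-graph node, for illustration only (not JavaFX). */
public class SceneNode {
    public final String name;
    // Transform of this node relative to its parent.
    public final AffineTransform local = new AffineTransform();
    public final List<SceneNode> children = new ArrayList<>();
    public SceneNode parent;

    public SceneNode(String name) {
        this.name = name;
    }

    /** Attach a child and return this node so calls can be chained. */
    public SceneNode add(SceneNode child) {
        child.parent = this;
        children.add(child);
        return this;
    }

    /** Compose the local transforms from the root down to this node. */
    public AffineTransform toScene() {
        AffineTransform t = (parent == null) ? new AffineTransform() : parent.toScene();
        t.concatenate(local);
        return t;
    }

    /** Map a point in this node's own coordinates to scene coordinates. */
    public Point2D localToScene(double x, double y) {
        return toScene().transform(new Point2D.Double(x, y), null);
    }

    public static void main(String[] args) {
        SceneNode root = new SceneNode("root");
        SceneNode page = new SceneNode("page");
        SceneNode icon = new SceneNode("icon");
        root.add(page);
        page.add(icon);

        page.local.translate(100, 50); // page positioned inside the root
        icon.local.translate(10, 20);  // icon positioned inside the page

        // Icon origin lands at (110, 70) in the scene.
        System.out.println(icon.localToScene(0, 0));

        // Zoom everything by touching only the root transform.
        root.local.scale(2, 2);

        // Icon origin now lands at (220, 140).
        System.out.println(icon.localToScene(0, 0));
    }
}
```

A real toolkit would cache the composed transforms and hand them to the GPU rather than recomputing them on every query, but the structure is the same: nothing below the root needs to know that a zoom happened.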

Some issues though:

- No printing yet.

- Performance degrades fairly significantly when a lot of data is present. e.g. in the JavaFX Ensemble demo opening view - trying to scroll list of sample icons.

- The vsync doesn't work very well, lots of glitches (but i blame this mostly on both pc hardware and linux: even a commodore 64 had hardware well beyond a pc video card for smooth animation).

- Scrolling the WebView is flicker-free, but not at as high a frame-rate as i'd expect for hardware rendering. But I don't know if it's delegating rendering to webkit as I do with PDFZ.

- The documentation needs work.

I'm not about to use it for anything; i'm not even going to bother to try for that matter, but I'll be keeping an eye on it. It seems to finally be heading in the direction it initially promised after JavaFX 2 was announced: a high performance modern toolkit with a clean simple(ish) design, media support, and so on. Simple enough to use as a RAD tool, but complete enough to write real applications as well.

It seems as though Java8 will have something to look forward to for once ...

I'm also curious to see how the JVM based JavaScript engine will work; the JVM is fast, but I suspect the language will have some bearing on achievable performance as well.

(i've nothing to comment on as regards the hope to use it as a flash replacement for internet deployment, i'm just considering it as a desktop application development platform).

Flash: good bye and good riddance

Hmmm, so the news of the day is that Adobe are dropping flash support for GNU/linux. Oh praise the day it finally goes away for everyone ...

One can speculate on why, and why they're only `supporting Google':

- Adobe are in the creation-tool business, why are they wasting so many resources on maintaining a crappy plugin they give away for free? i.e. there are compelling business reasons to kill it entirely.

- More and more internet-enabled devices are unable or unwilling to support it; without the ubiquity of client access there is little reason to use it. This is also why silverlight will never be more than a niche. I don't see the point of JavaFX either unless it is available for free on all devices fast enough to run it (it going GPL shows clearly that Oracle know this too; they can't afford to maintain it for all platforms, and nobody would ever pay to license it - and even then in the end it'll probably just be a swing replacement for desktop development).

- Paying clients are willing to prop up the legacy technology for the time being, so Adobe don't want to kill the whole project off. Pissing off paying customers isn't a long-term bread-winner.

- Most of these paying clients do not care about GNU/Linux enough to keep it around there.

- Google are willing to fund a partial solution: as a marketing exercise in order to move people to their application platform (otherwise known as a 'browser'). It's just a cynical exercise to gain market share.

- It's basically 'the world' vs firefox. Proprietary companies want more control of the web pie, and will work together against firefox any way they can. e.g. see the HTML5 video drm proposal, the fuck-up with H.264 HTML5 vs OGG video, and so on.

Overall this isn't such a bad thing: even if it is just at the periphery, fewer projects will consider using this legacy technology. Already with Apple not allowing flash on their web-enabled mobile devices they have destined it to become history. Without its cross-platform ability it loses its main feature. It's just shit tech anyway; flash struggles doing much on my dual-core low-res thinkpad; it will be a while before ARM chips match that (ok, maybe 12 months in consumer devices), but the machine is fast enough to run other software quite well.

However, everyone seems to think HTML5 will be the answer. I think it will be a horrible mess harking back to the bad old days of netscape and microsoft extensions. Already most of the HTML5 demos only work on one browser, or even a specific browser version. Most HTML5 video is in H.264 format: i.e. inaccessible from firefox by default. And you can't simply 'flash-block' a javascript animation: leading to an annoying browsing experience and a lot of wasted power animating web pages you're not even looking at.

I find it odd that HTML5 is being pushed so heavily: on the apple iphone you can't really do much more with it than simple games or animations. You can't even upload a file from a web-page (as far as i can tell), and device access is right out. To me the impression is that all the marketing hype is just FUD to keep people from spending time on competing technology.

Some of the tech demos are all very nice: but web software is still much shittier than a local application for interactivity and control, not to mention security. It's like using AMOS: it might be a lot easier to write a game, but you still end up with a shitty game. Down the track it's obviously aiming to be a 'be-all-end-all' RAD application development environment that makes writing simple applications easy; thing is, simple applications are already quite simple to write, and complex applications are always going to be complex to write. Adding the web tier adds a lot of complexity in itself.

Complexity is not your friend

The complexity of a standard like HTML5 isn't there to benefit users or developers. It is added to benefit proprietary vendors and stifle competition by raising the barrier of entry to new competitors. And of course lawyers and other parasites end up getting a cut as well: the complexity is so great vendors must cross-license software in order to be able to get something working. That's even before you add issues like patents into the mix, which is just insanity.

HTML is already so complex that creating a fully compliant implementation is only possible by large multi-national corporations; and opera and mozilla. HTML5 only raises the bar higher and that's before you add all the proprietary extensions to the standard which are already proliferating.

Goodbye Freedoms

HTML5 applications will also be much harder to modify and control; even if the source is available, it may be impossible to create a local copy that works without the infrastructure on the server-side used to support it.

Thus forget about distributing and sharing your changes with other users, or hiring a third party to make a customisation tailored to your business.

Welcome to the Tabbed Desktop

So we're all moving toward a tabbed desktop. i.e. who needs multitasking when you can just swap applications at the press of a key ... Well, microsoft windows and the apple macintosh pretty much forced this from the start because their systems were so poor at multitasking; but real systems have been able to utilise multiple overlapping windows in a way which improves user productivity for decades.

But it seems we're going back to the full-screen application model. Except now the applications are running in a crappy single-threaded virtual machine and being loaded remotely.

Hang on, I think that's the 80s calling ... they're asking how that WIMP thing worked out ...

Wednesday 22 February 2012

Early morning drowsy mistakes

Oops, so yesterday morning I went to replace a dead HDD and re-install the OS on it. I thought I was being careful and unplugged the other, working HDD with a different OS on it so when I came to partition the disks I just deleted everything (the replacement disk had previously had CentOS on it).

Only i'd unplugged the wrong one, and ended up deleting the working OS and not the spare drive i'd just installed! Unfortunately that OS's installation partitioning tool writes every change immediately, so it was all gone even though I realised before i'd moved to the next screen. The installation disk for that OS is pretty crap too - it kept refusing to install on the disk I just formatted using the installer: it took a couple of resets before it was happy with its own formatting ...

I tried recovering the partitions using testdisk, but either because of the filesystem types or the partition layout I had no luck with that. Just an hour down the drain waiting for it to scan the 1TB drive.

Fortunately I had made a full backup, so I lost nothing apart from half a day re-installing everything.

This time I installed Fedora 15 using the 'minimal install' option; and apart from some strange package selections (e.g. ssh client isn't installed, but server is), it actually took me a lot less time getting a comfortable and working system than it did when I just let it install GNOME and then had to remove all the crap (pussaudio, notworkmanager, and similar crud). What is also strange? It boots, logs in, and runs much faster than it did the day before. And now Thunar opens immediately the first time rather than pausing for a few seconds.

I had some weirdness with systemd and it not starting X after I installed it - which seems to be much more fucked and complex than I could possibly have imagined - but that just magically fixed itself after enough reboots. Also with thunar's auto-mounting stuff, which I think I fixed by installing gvfs, but it might've just been enough reboots too ...

Wednesday 15 February 2012

Dishwasher

So the dishwasher that came with the house finally died: I've hardly ever used it. It's been flakey for ages and I've mostly been waiting for it to die so I can replace it with one that cleans properly and uses less water so I can actually use the thing. Not that I would even bother with a dishwasher myself if it wasn't there, but well i'm getting lazy and the rest of the house seems to waste a lot of water doing dishes. It washes glasses well too.

I think it's only a solenoid gone; if I add water into the sump it pumps it down the drain so the pump works. Or maybe it's the logic board: it runs for half an hour with no water in it and sits there blinking at me.

Ahh decisions. I spent hours very late last night (for some reason I wasn't tired), trying to work out what to get. Money isn't an issue: but I don't like getting ripped off either. I've a Miele washing machine and plenty of people swear by those for dishwashers too but they do seem a bit pricey. But then there's Asko and Bosch too ...

I'm thinking the latest Asko at the moment, but I guess I should try and find somewhere that sells most of them so I can have a look. Unfortunately the big-box retailers are miles away and mostly in people-unfriendly strip-malls; or they're so fucked I'll never return to them (Radio Rentals, Main North Road: unfriendly shop design, with one-way doors and no staff, Spartan Electrical, Henly Beach Road: fuckwit salesman when I was after a stick blender I knew I could get $15 cheaper 5 minutes away by bicycle).

So it could be a long purchasing cycle: waiting until I could be bothered to make the long trek to a shop, hoping they have the ones i'm interested to look at, and putting up with being treated like shit by salesmen who will say anything to sell. Or getting fed up and going on-line again with a sight-unseen buy.

Hmm, maybe i'll poke around the back of the one I have ...

Update: Well the back-look was worth it. Apart from finding the remains of a mouse and nest - which explains at least one of the weird smells in the kitchen over the years - I gave up and shoved it all back together (in a rather slap-shod not-caring kind of way so now the door's a bit stiff). And now it's taking in water ok, will have to see how the full load of glasses and jars I wanted to clean goes. Either I jolted something in the process or it was just the act of re-seating the solenoid connector ...

Update: Ahh fuck it, it wasn't that: it just keeps pumping for 10 minutes after the initial spray, and eventually times out. Water sensor must be out. Something for another day.

Crappy Stories, Shitty Journalism, etc.

I read the tech news fairly regularly: mostly via boycottnovell.org since it's conveniently catalogued there (but not only for that reason). I occasionally drive-by comment. But today's headlines do seem a bit crappier than usual so I guess it's time for a bit of a rant. I think I finally got the hacker worked out of me for the time being too (and I need to get the house ready for a party), so that's always good for a rantfest.

I won't link to the articles, check the source if you care, which you probably don't.

- Is Windows 8 Metro failing even at Microsoft?

- SJVN is normally worth a read, it's all a bit fluffy but at least he's (usually) fairly on track. But today SJVN's lost the plot a bit, surely an ex-developer using an Apple PC must be the end for Microsoft?!

This one almost got me to sign up to zdnet, but thankfully I decided against that - the commenting readers there are pretty rabid and like them i'm only likely to comment when pissed off.

Although clearly the 'tablet shell on a desktop pc' idea is insanity, and so is a 'desktop shell on a phone', but the main thrust of the article seems to be dissing a photograph of some ex-microsoft lad sitting at a desk adorned with an Apple PC?

Firstly, who cares what some guy i've never heard of has behind him in a picture of him seated at a desk. Even if he was still at microsoft (although I'm led to believe their culture is kind of fucked up so wouldn't allow it), it's no big deal to track the competition: actually it's quite smart. And apart from that we all know that plenty of Free Software, `open source', and Linux developers use Apple PC's.

And people in big companies leave all the time, and for software that is more likely to happen at the end of a release. This is something Roy on boycottnovell.org seems to get worked up a bit too much over as well.

- FSF Wants To Police JavaScript Use

- Well any article purportedly about the Free Software Foundation which mentioned `open source' in the first paragraph isn't even worth reading. So I didn't.

Can't have had much thought put into it.

- iOS More Crashtastic Than Android (they've even got some stupid 'copyright' notice when trying to copy and paste the title, i decided to delete it)

- This seems to be a PR release from 'Crittercism' (whomever-the-fuck they are) with almost no journalistic input.

From a magazine calling itself 'Linux Insider', you'd think they'd point out one of the main points of Android's stability: its kernel is Linux, which has had far more resources put into it than any kernel in history. No single corporate identity could ever compete with that.

Not to mention the application layer technology which is the real issue when talking about end-user application software:

- Android's application layer is based on a mature platform proven to be stable, robust, and above all: crash-resistant. Java.

- iOS' is based on C. That should be `'nuff said', but not only that, some fucked up weird-arsed dialect of C, unfamiliar to most of the programming world. And we all know how robust and crash resistant C is. I really love C, and can write good C (IMNSHO), but crash-proof and robust it is clearly not.

I've told this anecdote before, but when I first started coding in Java again (after a year-long-ish stint pre-2000), I was astonished that my code didn't ever crash. Sure i could get piles of exceptions and things wouldn't function; but the application would keep plodding along tickety-boo. I had just had 3 years of c-hash, and I'd always been led to believe that dot-net was basically just the same as Java.

And that crashed all the time. It's not as easy to crash as C which makes something of an art out of it, but it still crashes, and even what should be non-fatal errors can bring the application down with no indication of the cause.

But of course it isn't like Java at all: at the heart of it, dot-net is just a different and more dynamic linkage system for object files distributed in an intermediate format. So it still crashes like C, particularly when the platform was so immature and badly written (WPF, how I don't miss you). That's before you add the shitty memory management and crappy compiler to boot (oh man, and visual studio: i'm still astonished anybody could do more than barely-tolerate that piece of shit: some people actually seem to like it).

- Is GNU/Linux just not cool anymore?

- This article isn't really too bad, for what it is (and it isn't much). For the `Free Software Magazine', they could have at least grabbed the google trends on 'free software' and 'open source'.

Particularly considering the only non-commercial searches they performed were for 'linux mint', and 'gnu/linux', and two others weren't even related to software development in any way-shape-or-form and seem to have been added just to find an up- and a down-pointing curve to add visual aesthetics to the page.

Ok, so maybe the article wasn't so good after-all.

If they had tried `free software', at least they'd have found a fairly flat trend. Unlike `open source' which only sees steady decline (despite it being the term more often showing up in discussions about security matters based on its other, more-descriptive meaning).

Tuesday 14 February 2012

Australian politics and the reporting thereof.

Plumbing the depths of irrelevance and insignificance.

At best, the reporters seem to think they're writing the society pages for Canberra socialites, or following TV celebrities (i.e. famous for no reason). At worst, it's just the local small-town gossip column.

And all the pollies only seem to be interested in making those pages as well.

Real shit happens every week that affects us all and the so-called reporters only want to talk about leadership squabbling (which seems to be made up for the most part) and Tony's dick stickers - when they're not talking about American politics that is. It's like they're writing/talking shit for their 'in-crowd' mates to chat about at their next cocktail party with the 'stars' they fawn over.

It doesn't help that Tones is a complete and utter nut-case, and Julia - just like Kev before her - only seems to want to lead from behind, making decisions and statements based on polls or some fukwit's column in the Murdoch press, or some local talkback radio station that most of the country can't even listen to ...

Apart from the 'insider' reporters and idiots like Barners, who really gives a fuck about this gossip crap?

And Crabb with her, `Cooking in the cabinet' (or whatever it's called) - a pretty ordinary looking 'celebrity host' cooking show: with active cabinet members presumably. Surely a low-point of both Australian politics and political reporting ...

(I usually just don't even bother watching the news, but I caught some earlier this evening about the never ending Rudd leadership ambitions crap and it ticked me off).

Monday 13 February 2012

PDFZ searching

So after finding and fixing the bug in my outline binding - a very stupid paste-o - I added a table of content navigator to PDFReader, and then I had a look at search.

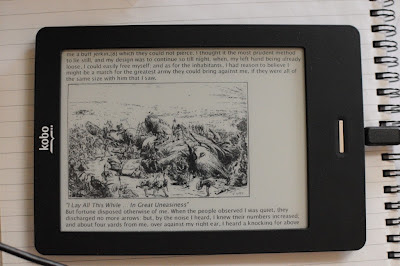

Which ... I managed to get working, at least as a start:

As can be seen, it's a little flaky - mupdf is adding spaces here and there in the recovered text, and i'm not sure i'm processing the EOL marker properly (and possibly I have a bug in the search trie code too). But as I said - it's a start.

I decided to use a Trie for the search (Aho-Corasick algorithm) - because I know it's an efficient algorithm, and because I know there was a good implementation in evolution. So I grabbed an old copy from the GPL sources and modified it to work on the mupdf fz_text_span code. Thanks Jeff ;-) Basically it's a state machine that can match multiple (possibly overlapping) words whilst only ever advancing the search stream one character at a time.
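To make the "state machine that only ever advances one character at a time" idea concrete, here is a minimal Aho-Corasick sketch in Java. It is not the evolution code or the version adapted to mupdf's fz_text_span; the class and method names (`AhoCorasick`, `Match`, `search`) are made up for illustration, and it matches case-sensitively on a plain `CharSequence`.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Queue;

/** Minimal Aho-Corasick matcher: a trie of the search words plus failure links. */
final class AhoCorasick {
    private static final class Node {
        final Map<Character, Node> next = new HashMap<>();
        Node fail;                                   // state for the longest suffix that is also a trie path
        final List<String> out = new ArrayList<>();  // words that end when this state is reached
    }

    /** One hit: the word found and the offset of its first character. */
    static final class Match {
        final String word;
        final int start;
        Match(String word, int start) { this.word = word; this.start = start; }
    }

    private final Node root = new Node();

    AhoCorasick(List<String> words) {
        // Build the trie.
        for (String w : words) {
            Node n = root;
            for (char c : w.toCharArray())
                n = n.next.computeIfAbsent(c, k -> new Node());
            n.out.add(w);
        }
        // Breadth-first pass to fill in the failure links.
        Queue<Node> queue = new ArrayDeque<>();
        for (Node child : root.next.values()) {
            child.fail = root;
            queue.add(child);
        }
        while (!queue.isEmpty()) {
            Node n = queue.remove();
            for (Map.Entry<Character, Node> e : n.next.entrySet()) {
                Node child = e.getValue();
                Node f = n.fail;
                while (f != root && !f.next.containsKey(e.getKey()))
                    f = f.fail;
                Node target = f.next.get(e.getKey());
                child.fail = (target != null) ? target : root;
                child.out.addAll(child.fail.out);    // inherit words ending at the suffix state
                queue.add(child);
            }
        }
    }

    /** Scans the text once, never backing up, and reports every (possibly overlapping) hit. */
    List<Match> search(CharSequence text) {
        List<Match> found = new ArrayList<>();
        Node state = root;
        for (int i = 0; i < text.length(); i++) {
            char c = text.charAt(i);
            while (state != root && !state.next.containsKey(c))
                state = state.fail;
            Node n = state.next.get(c);
            state = (n != null) ? n : root;
            for (String w : state.out)
                found.add(new Match(w, i - w.length() + 1));
        }
        return found;
    }
}
```

Searching "ushers" for the words he, she, his and hers reports she, he and hers, including the overlapping pair, in a single left-to-right pass.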

I tried to copy the emacs mode of searching to some extent:

- / or ctrl-s starts a search

- The search updates immediately for the current page as characters are typed.

- The next search on the current page is highlighted when ctrl-s is pressed again.

- If there are no more results on the current page, the search starts scanning the document if ctrl-s is pressed again.

- ESC closes the command prompt.

I put some code in there to abort the search if the page is changed while it's still searching (because I hooked the search into the page loader/renderer), but on the documents i've tried it on it's been so fast I haven't been able to test it ...

This is one of the first times I used JNI to create complex Java objects from C - the array of results for a given page. It turns out it's fairly clean and simple to do.

I guess the next thing is to see if i can integrate the search functionality into ReaderZ. Time to write one of those horrible on-screen keyboards I guess ...

But for now ... the weather's way too nice to be inside, so I think it's off to the garden, beer in hand ...

Sunday 12 February 2012

PDFZ stuff

Amongst other hacking, I poked around with PDFZ a bit today:

- Tried to bind the outline interfaces, but either i made a big mistake or something odd is going on: the pointer I allocate in the creation function won't resolve when I go to use it (or, it resolves to NULL). Spent a long time going nowhere on that one.

- Ported some of the ReaderZ code to swing and made a simple desktop PDF viewer. It renders on the fly as you pan around, and works quite well. I use another thread to load and render pages as in ReaderZ. I'm a bit dented that I couldn't get the outline stuff to work.

- Played with an alternative page rendering method: rendering directly to the array of a BufferedImage using GetPrimitiveArrayCritical(). Can't tell the difference on the desktop but it might be useful on the kobo, and could use less memory anyway.

- Noticed that mupdf is undergoing rapid development at the moment; i'm not trying to track it at all.

Update: I got in touch with the mupdf devs, and I found out the next release is targeted by the end of the month; this will be a good opportunity to sync up with the api whilst the project is still fresh in my mind.
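For the alternative rendering method mentioned above, the Java half looks roughly like the sketch below: grab the `int[]` that backs a `BufferedImage` and hand it to whatever fills in the pixels. In PDFZ that would be native code using GetPrimitiveArrayCritical(); here `renderInto` is a hypothetical pure-Java stand-in so the example runs on its own, and the class name is made up.

```java
import java.awt.image.BufferedImage;
import java.awt.image.DataBufferInt;

final class DirectRender {
    /** Hypothetical stand-in for the native renderer: fills the pixel array it is handed. */
    static void renderInto(int[] pixels, int width, int height) {
        for (int y = 0; y < height; y++)
            for (int x = 0; x < width; x++)
                pixels[y * width + x] = 0xFF000000 | (x << 16) | (y << 8); // opaque test pattern
    }

    /** Renders straight into the image's backing array, with no intermediate buffer or copy. */
    static BufferedImage renderPage(int width, int height) {
        BufferedImage image = new BufferedImage(width, height, BufferedImage.TYPE_INT_ARGB);
        // For TYPE_INT_ARGB the raster is backed by a single int[] of width*height pixels.
        int[] pixels = ((DataBufferInt) image.getRaster().getDataBuffer()).getData();
        renderInto(pixels, width, height);
        return image;
    }
}
```

One thing to keep in mind: as far as I know, taking the backing array like this stops Java2D from caching the image in accelerated memory, so whether it is a net win probably depends on the platform.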

Saturday 11 February 2012

Blah, ffmpeg changes and pain.

Sigh. I was looking at moving jjmpeg to ffmpeg 0.10, and along the way removing the use of deprecated APIs.

But there's a lot of pain involved here:

- There's quite a lot of deprecated stuff - Well what can you do eh?

- Custom streams are completely deprecated with no public API to replace them. That means AVIOStream will have to be thrown away and at best you're left with using pipes or sockets.

- Some arguments are now arrays. This is a lot more of a head-fuck than you'd imagine and will require messy and inefficient code to marshal values around.

- Some constructors now take in-out parameters as the object pointer. This requires a new constructor mechanism and frobbing around.

- Some arguments are also in-out parameters. Hello CORBA style holder arguments, or some other mechanism (the Holder type seems the easiest though) and a lot of fuffing about with jni callbacks to access them. Also, this cannot be inferred from the prototype alone, so I also need to expand the generator for that.

The last 3 not being in libav* was one of the reasons I tackled jjmpeg in the first place, it was a nice clean api using fairly consistent conventions. This made it quite simple to bind.
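As a rough illustration of the Holder idea for in-out arguments, here is a minimal sketch. Nothing in it is jjmpeg or ffmpeg API: `Holder`, `HolderDemo`, `applyOptions` and the option names are hypothetical, and the "native" call is faked in Java. It only shows the calling convention, where the callee reads the incoming value and replaces it with whatever it did not consume.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

/** CORBA-style holder: a mutable box standing in for a C in-out pointer argument. */
final class Holder<T> {
    T value;
    Holder(T value) { this.value = value; }
}

final class HolderDemo {
    // Hypothetical set of option names the callee understands.
    private static final Set<String> KNOWN = new HashSet<>(Arrays.asList("threads", "bitrate"));

    /**
     * Hypothetical stand-in for a bound call with an in-out argument.
     * Consumes the options it recognises and writes the rest back into the holder.
     * Returns the number of options applied.
     */
    static int applyOptions(Holder<String> options) {
        List<String> unused = new ArrayList<>();
        int applied = 0;
        for (String pair : options.value.split(",")) {
            String key = pair.split("=", 2)[0];
            if (KNOWN.contains(key))
                applied++;
            else
                unused.add(pair);
        }
        options.value = String.join(",", unused); // the "out" half of the in-out argument
        return applied;
    }
}
```

The caller creates the holder, makes the call, and then inspects `options.value` to see what came back. A real binding would need the JNI side to read and write that field through callbacks, which is where the fuffing about comes in.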

Friday 10 February 2012

Tuning ...

Had a poke at some performance tuning of jjmpeg.

I took 2 videos:

- PAL: A PAL DVD, half hour show.

- 1080p: A half hour show recorded directly with a DVB-T receiver. 1440x1080p, ~30fps, 10MB/s.

I then used JJMediaReader to scan the files and decode the video frames to their native format. I then took this frame and converted it to an RGB format using one of the tests below.

- ByteBuffer: Code uses libswscale to write to an avcodec allocated frame in BGR24 format. The frame is not accessed from Java: this is the baseline performance of using a ByteBuffer, and it could be the end point if then passing the data to JOGL or JOCL.

- ByteBuffer to Array: Perform the above, then use nio to copy the content to a Java byte array.

- IntBuffer: Code uses libswscale to write to an avcodec allocated frame in ABGR format. Similar to the first test, but a baseline for ABGR conversion.

- IntBuffer to Array: Perform the above, then use nio to copy the content to a Java int array.

- int array: Use JNI function GetPrimitiveArrayCritical, form a dummy image that points to it, and write to it directly using libswscale to ABGR format. This gives the Java end an integer array to work with directly.

In all cases the GC load was zero for reading all frames (i.e. no per-frame objects were allocated). I'm using JDK 1.7. The machine is an intel i7x980. I'm using a fairly old build of ffmpeg (version 52 of libavcodec/libavformat).

The timing results (in seconds):

| Test \ Video | PAL | 1440x1080p |
|---|---|---|
| ByteBuffer | 81.5 | 237 |
| ByteBuffer to byte[] | 86.0 | 279 |
| IntBuffer | 81.3 | 242 |
| IntBuffer to int[] | 86 | 297 |
| int[] | 81.9 | 242 |

Discussion

So ... using GetPrimitiveArrayCritical is the same speed as using a Direct ByteBuffer - but the data is faster to then access from Java as it can just be indexed.

Using RGB and ByteBuffer's is a bit quicker than using RGBA. Apart from the differences down to libswscale there seems some overhead using an IntBuffer (derived from a ByteBuffer) to write to an Int array.

Using RGB is marginally quicker than using RGBA - although that's mostly down to libswscale, and for my build nothing is accelerated. When I move to ffmpeg 0.10 I will re-check the default formats i'm using are the quick(?) ones.

When using a direct buffer and then copying the whole array to a corresponding java array, the overhead is fairly small until the video size increases to HD resolutions. At 23% for 1440x1080xABGR, it is approaching a significant amount: but this application does nothing with the data. Any processing performed will reduce this quickly. At PAL resolution it's only about 5%.
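The overhead figures can be re-derived from the timing results: each is the extra time of the copying test relative to its no-copy baseline. A small sketch (the class name is made up, the numbers are the ones listed above):

```java
final class CopyOverhead {
    /** Extra time of the copying variant relative to its no-copy baseline, in percent. */
    static double percent(double baselineSeconds, double withCopySeconds) {
        return (withCopySeconds - baselineSeconds) / baselineSeconds * 100.0;
    }

    public static void main(String[] args) {
        // IntBuffer vs IntBuffer to int[] (ABGR)
        System.out.printf("ABGR  1440x1080: %.1f%%%n", percent(242, 297));
        System.out.printf("ABGR  PAL:       %.1f%%%n", percent(81.3, 86));
        // ByteBuffer vs ByteBuffer to byte[] (BGR24)
        System.out.printf("BGR24 1440x1080: %.1f%%%n", percent(237, 279));
        System.out.printf("BGR24 PAL:       %.1f%%%n", percent(81.5, 86.0));
    }
}
```

The HD ABGR case rounds to the 23% quoted above, and both PAL cases land between 5% and 6%.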

Conclusions

For modern desktop hardware, it probably doesn't really matter: the machine is fast enough that a redundant copy isn't much overhead, even at HD resolution.

Possibly of more interest is how the rest of the pipeline copes. Obviously with JOGL or JOCL the work is already done when using ByteBuffers, or ideally you'd process the YUV data yourself. I'm not sure about Java2D though, from a previous post there's a suggestion integer BufferedImage is the fastest.

However there are possibly cases where it would be beneficial and for Java image processing it is probably easier to use anyway: so I will add this new interface to jjmpeg after confirming it actually works.

I also found a bug in AVPlane where I wasn't setting the JNI-allocated ByteBuffer to native byte order. This made a big difference to the IntBuffer to int[] version (well 44% over no array copy in PAL), but wouldn't have been hit with my existing code.
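A minimal sketch of the "IntBuffer to int[]" copy with the byte order set explicitly. A newly created `ByteBuffer` (including one made from JNI) starts out big-endian regardless of the platform, so the order has to be set before taking the int view. The class and method names here are made up; this is not the AVPlane code.

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;
import java.nio.IntBuffer;

final class BufferCopy {
    /** Copies a frame of packed 32-bit pixels from a (direct) byte buffer into a Java int array. */
    static int[] toIntArray(ByteBuffer frame) {
        // duplicate() shares the content but resets the byte order to big-endian,
        // so the native order must be applied before creating the int view.
        IntBuffer ints = frame.duplicate().order(ByteOrder.nativeOrder()).asIntBuffer();
        int[] pixels = new int[ints.remaining()];
        ints.get(pixels); // bulk copy
        return pixels;
    }
}
```

With the view in native order the bulk `get` needs no per-element byte swapping on the way through, which would be consistent with the difference measured above.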

Sleep n Whinge

ugh, what a crappy day. I hit the grog a bit hard last night (sister dropped by for a couple of hours on her way to the airport), and subsequently had very little sleep; and the neighbours decided today was a good day to re-start the work on the extensions next door. Had a nap about 5, at least until some dodgy scam out of India rang up about 7:30. Blah.

But I played a bit with some code during the day. I poked around with my slideshow creator, working on some more transition wipes - worked out a 'clock' transition which seemed to take much longer than it should have (for lack of inspiration I'm looking at the SMIL stuff for ideas). I was going to write a very simple front-end gui for it, but just didn't have the motivation for that today.
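For what it's worth, one way a 'clock' wipe can be done in Java2D is with a pie-shaped clip. This is only a sketch under that assumption, not the slideshow creator's code; `ClockWipe` and `render` are made-up names, and `progress` is assumed to run from 0 to 1.

```java
import java.awt.Graphics2D;
import java.awt.geom.Arc2D;
import java.awt.image.BufferedImage;

final class ClockWipe {
    /**
     * Draws 'from' into 'frame', then reveals 'to' through a pie-shaped clip
     * that sweeps clockwise from 12 o'clock as progress goes from 0 to 1.
     */
    static void render(BufferedImage frame, BufferedImage from, BufferedImage to, double progress) {
        int w = frame.getWidth(), h = frame.getHeight();
        double r = Math.hypot(w, h); // radius large enough to cover the corners
        Graphics2D g = frame.createGraphics();
        try {
            g.drawImage(from, 0, 0, null);
            // Arc2D angles run counter-clockwise from 3 o'clock, so start at 90
            // degrees and use a negative extent to sweep clockwise.
            g.setClip(new Arc2D.Double(w / 2.0 - r, h / 2.0 - r, 2 * r, 2 * r,
                                       90, -360 * progress, Arc2D.PIE));
            g.drawImage(to, 0, 0, null);
        } finally {
            g.dispose();
        }
    }
}
```

Calling `render` once per output frame with an increasing `progress` gives the wipe; other transitions would only need a different clip shape.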

Then I got totally side-tracked with some other stuff: I noticed javafx builds are finally available for gnu/linux, looking at the swingx demo (there's a couple of things that look interesting), the image filters it uses. Mr Huxtable also has an interesting article about BufferedImage stuff (which i'm sure i've read before but must have forgotten about): and that got me thinking about changing the way jjmpeg's helpers work with images as it uses 3BYTE_BGR types and direct DataBuffer access. And that got me thinking about JNIEnv.GetPrimitiveArrayCritical (to avoid 2 copies), and well by this time I was too hung-over and tired to do anything useful.

I also noticed the neighbours were building a really big verandah which will block most of the direct light into my bathroom, and they over-cut a bit of a tree that hangs over the boundary. And I got a letter from my insurance company whining about an over-charge they shouldn't have been making in the first place. And all that together with the severe lack of sleep put me in a terrible mood and made me feel really rather miserable. And now it's 3am and they'll be at it at 7am again next to my bedroom window so tomorrow probably won't be much better ...

Update: Oh fun, 7:25am, shit radio station was bad enough, now it's with the jack-hammer.

Wednesday 8 February 2012

VideoZ

I had a go at writing a simple 'media mixer' today. So far it's only video, but i'm already thinking about how to do the sound (hence some work on JOAL yesterday, I'm planning on using OpenAL-Soft to do the mixing, which gives me '3d sound' for free as well). Sound is a bit more difficult than video ...

As output it generates an encoded video file; using jjmpeg of course.

With a small amount of code i've got a slideshow generator, together with affine transforms, opacity, and video or still pictures. I'm just using Java2D for all the rendering: so the compositor is fairly slow, but it's workable.
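The per-layer step of a Java2D compositor like this can be quite small. The sketch below is an assumption about the general shape, not the VideoZ code: `Compositor` and `drawLayer` are hypothetical names, and each layer is just an image with a transform and an opacity.

```java
import java.awt.AlphaComposite;
import java.awt.Graphics2D;
import java.awt.geom.AffineTransform;
import java.awt.image.BufferedImage;

final class Compositor {
    /** Draws one layer (a still picture or a decoded video frame) onto the output frame. */
    static void drawLayer(BufferedImage frame, BufferedImage layer,
                          AffineTransform transform, float opacity) {
        Graphics2D g = frame.createGraphics();
        try {
            // SRC_OVER with an extra alpha gives the per-layer opacity.
            g.setComposite(AlphaComposite.getInstance(AlphaComposite.SRC_OVER, opacity));
            // The transform positions, scales or rotates the layer within the frame.
            g.drawImage(layer, transform, null);
        } finally {
            g.dispose();
        }
    }
}
```

A slideshow frame is then a loop over the layers from back to front, with each layer's transform and opacity interpolated for the current timestamp before the finished frame goes to the encoder.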

But, the biggest part of any real application such as this is the user interface for setting up the animation parameters ...

Tuesday 7 February 2012

Paged layout, busy dot.

After poking around at jjmpeg a bit this morning, I played a bit more with ReaderZ. First I added an animated 'busy' icon for when the reader is busy, and moved the epub html loader to another thread so it animates. It's ugly, but it works. I simplified the use of the event manager as well.

Then I redesigned the BlockLayout code in CSZ so that I could sub-class it to create a paged media layout. It isn't 'conformant' by any stretch, and has a bug with tall images, but at least it forces lines to align to a new page once they've overflowed the viewport.
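The page-alignment rule can be sketched independently of the CSZ classes. This is a simplified model that assumes each line box is only a height and the page is only a viewport height; `PagedLayout` and `layout` are made-up names, not the BlockLayout sub-class.

```java
final class PagedLayout {
    /**
     * Returns the top y position of each line. A line that would straddle a page
     * boundary is pushed to the top of the next page.
     */
    static int[] layout(int[] lineHeights, int pageHeight) {
        int[] tops = new int[lineHeights.length];
        int y = 0;
        for (int i = 0; i < lineHeights.length; i++) {
            int h = lineHeights[i];
            int pageTop = (y / pageHeight) * pageHeight;
            int used = y - pageTop;
            // Overflowing the viewport forces a new page, unless the line already
            // starts at the top of one (a line taller than the page just overflows).
            if (used > 0 && used + h > pageHeight)
                y = pageTop + pageHeight;
            tops[i] = y;
            y += h;
        }
        return tops;
    }
}
```

With four lines of height 40 and a page height of 100, the third line moves from 80 to 100. Anything taller than a page still spills over, which is the same kind of limitation as the tall-image bug mentioned above.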

During this I realised I probably won't be able to get away with a single-pass for the layout. e.g. if you have an auto-sized box, its size depends on things like the size of floats and the lineboxes inside of it. But you have to lay these all out before you can determine what it is, and then must lay them out again afterwards once you've determined the real size you're working with (also required for things like text-align). I might have to lay out individual words instead so then the second layout can be fast as well as letting the layout be handled separately from the text object.

Monday 6 February 2012

jjmpeg transcoding

Well I had a go at transcoding using jjmpeg. I added the binding required to get it to work and added a new JJMediaWriter class to handle some of the details.

It doesn't work very well - many formats just crash. But at least avi with a few formats works. I presume i have some problems with the buffer sizes or some-such.

Update: A misunderstanding of the JNI api means I was getting a ByteBuffer pointing to 0, rather than a null ByteBuffer. I've fixed that up and now the transcode demo works a bit better. I'm still not flushing the decoders on close, so it isn't complete yet.

Sources:

Saturday 4 February 2012

e-reader, epub

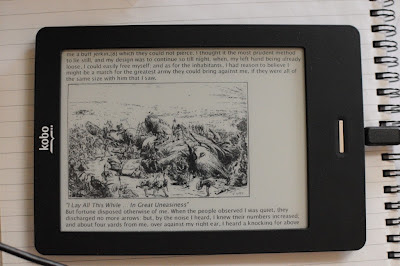

Had a mini hack-fest today, and whipped up an e-pub backend for ReaderZ based on CSZ.

I added very basic img tag support as well, as is obvious.

Apart from the code to parse the content.opf file from the .epub archive, which was fairly small, I spent the most time trying to work out a URL handler for a made-up 'epub:' protocol. I copied the way the jar: protocol handler distinguishes between the base archive and the filename using "!/" - this is so that the normal URL resolution mechanisms work. But I also wanted to resolve by the manifest ID and I use the URL fragment for that (although in hindsight I probably don't need it). But anyway in the end it wasn't much code, and having it there made everything 'just work', which was nice.
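For what it's worth, the "!/" split can be sketched without touching the real `URLStreamHandler` machinery at all. The class below is purely illustrative (the name `EpubUrl` and its fields are assumptions, not the ReaderZ code): it splits an 'epub:' URL into archive, entry and fragment, and resolves relative links against the entry path only, which is roughly what the jar:-style layout buys you.

```java
import java.net.URI;

// Hypothetical sketch of a jar:-style "epub:" URL, e.g.
//   epub:file:/tmp/book.epub!/OEBPS/ch1.html#item3
final class EpubUrl {
    final String archive;   // URL of the .epub file itself
    final String entry;     // path inside the archive, starting with '/'
    final String fragment;  // optional fragment (could carry a manifest ID), or null

    EpubUrl(String archive, String entry, String fragment) {
        this.archive = archive;
        this.entry = entry;
        this.fragment = fragment;
    }

    static EpubUrl parse(String spec) {
        if (!spec.startsWith("epub:")) {
            throw new IllegalArgumentException("not an epub: URL: " + spec);
        }
        String rest = spec.substring("epub:".length());
        String fragment = null;
        int hash = rest.indexOf('#');
        if (hash >= 0) {
            fragment = rest.substring(hash + 1);
            rest = rest.substring(0, hash);
        }
        int sep = rest.indexOf("!/");
        if (sep < 0) {
            throw new IllegalArgumentException("missing \"!/\" separator: " + spec);
        }
        // Keep the '/' with the entry so it behaves like an absolute path.
        return new EpubUrl(rest.substring(0, sep), rest.substring(sep + 1), fragment);
    }

    /** Resolve a relative link (as found in a chapter) against this entry. */
    EpubUrl resolve(String relative) {
        URI resolved = URI.create(entry).resolve(relative);
        return new EpubUrl(archive, resolved.getPath(), resolved.getFragment());
    }

    @Override
    public String toString() {
        return "epub:" + archive + "!" + entry + (fragment != null ? "#" + fragment : "");
    }
}
```

So a link to `ch2.html` found inside `/OEBPS/ch1.html` resolves to another entry in the same archive, with the archive part carried along untouched.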

I also had to deal with all the crap XML brings along: i.e. dtd resolution.
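One common way to sidestep the DTD fetch with the stock JAXP parser is to install an `EntityResolver` that hands back an empty document for every external entity. This is only a sketch of that general technique (the class name `NoDtdParser` is made up, and it isn't necessarily what CSZ does). A better resolver would map the well-known XHTML public IDs to local copies of the DTDs, since with an empty DTD any named entity such as `&nbsp;` is undefined and will fail to parse.

```java
import java.io.StringReader;
import javax.xml.parsers.DocumentBuilder;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.xml.sax.InputSource;

// Hypothetical helper: parse XML without ever fetching an external DTD.
final class NoDtdParser {
    static Document parse(String xml) {
        try {
            DocumentBuilderFactory factory = DocumentBuilderFactory.newInstance();
            factory.setNamespaceAware(true);
            DocumentBuilder builder = factory.newDocumentBuilder();
            // Answer every external entity request with an empty stream, so the
            // parser never goes to the network for the DOCTYPE's system ID.
            builder.setEntityResolver(
                    (publicId, systemId) -> new InputSource(new StringReader("")));
            return builder.parse(new InputSource(new StringReader(xml)));
        } catch (Exception e) {
            throw new IllegalStateException("could not parse document", e);
        }
    }
}
```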

The actual viewer is a bit unwieldy as it works as a set of html pages. So you need to pan around to read each 'page' (i.e. chapter, or whole book), and changing pages flips between the items in the spine (i.e. chapters or whole book). To do better than that I really need a paginating layout engine: which is something for later.
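For reference, the spine is just an ordered list of `itemref` elements whose `idref` points back at `item` entries in the manifest, so working out the page-flip order is a small lookup. Here is a minimal sketch using the standard DOM API; the class and method names (`OpfSpine`, `readingOrder`) are invented for the example and it ignores everything else in content.opf.

```java
import java.io.StringReader;
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;
import org.xml.sax.InputSource;

// Hypothetical sketch: turn content.opf into an ordered list of hrefs.
final class OpfSpine {
    static final String OPF_NS = "http://www.idpf.org/2007/opf";

    static List<String> readingOrder(String opfXml) {
        try {
            DocumentBuilderFactory factory = DocumentBuilderFactory.newInstance();
            factory.setNamespaceAware(true);
            Document doc = factory.newDocumentBuilder()
                    .parse(new InputSource(new StringReader(opfXml)));

            // manifest: id -> href for every file in the book
            Map<String, String> manifest = new HashMap<>();
            NodeList items = doc.getElementsByTagNameNS(OPF_NS, "item");
            for (int i = 0; i < items.getLength(); i++) {
                Element item = (Element) items.item(i);
                manifest.put(item.getAttribute("id"), item.getAttribute("href"));
            }

            // spine: the reading order, as references into the manifest
            List<String> order = new ArrayList<>();
            NodeList refs = doc.getElementsByTagNameNS(OPF_NS, "itemref");
            for (int i = 0; i < refs.getLength(); i++) {
                String href = manifest.get(((Element) refs.item(i)).getAttribute("idref"));
                if (href != null) {
                    order.add(href);
                }
            }
            return order;
        } catch (Exception e) {
            throw new IllegalStateException("could not read content.opf", e);
        }
    }
}
```

Changing pages in the viewer then amounts to stepping an index through that list.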

I have no svg support, not surprisingly, so title pages which are pure svg come up as a reassuring blank.

Still a bit slow opening new chapters, but what can you do eh?

It's all been checked in to ReaderZ and CSZ.

Thursday 2 February 2012

floats n stuff

I made some more progress on CSZ. The latest thing I have sort-of working are floats.

I think I'm interpreting the bits I've implemented correctly: floats are quite limited so the layout logic isn't terribly complex. I still have no borders or padding (and I removed the fudge factor I had in before) so it looks a bit cramped.
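As a very stripped-down illustration of why the logic stays manageable: once the floats have been placed, each line box only needs to know how much horizontal room is left at its vertical position. The sketch below assumes already-positioned floats and ignores clearance, margins and everything else; `FloatBand`, `Box` and `lineSpan` are hypothetical names, not CSZ classes.

```java
import java.util.List;

// Hypothetical sketch: horizontal space left for a line box next to floats.
final class FloatBand {
    /** A placed float, in the coordinate space of its containing block. */
    static final class Box {
        final double x, y, w, h;
        final boolean left;   // true for float:left, false for float:right

        Box(double x, double y, double w, double h, boolean left) {
            this.x = x;
            this.y = y;
            this.w = w;
            this.h = h;
            this.left = left;
        }
    }

    /** Returns {leftEdge, rightEdge} available to a line at y with the given height. */
    static double[] lineSpan(List<Box> floats, double containerWidth, double y, double lineHeight) {
        double left = 0;
        double right = containerWidth;
        for (Box f : floats) {
            // Only floats that vertically overlap the line narrow it.
            boolean overlaps = f.y < y + lineHeight && f.y + f.h > y;
            if (!overlaps) {
                continue;
            }
            if (f.left) {
                left = Math.max(left, f.x + f.w);
            } else {
                right = Math.min(right, f.x);
            }
        }
        return new double[] { left, right };
    }
}
```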

It's still sad just how much crap you need to get to even this point ...

I just got a call from work and they want me back in a couple of weeks, so I might turn down the effort a bit so I can psych myself up for that. Maybe I'll finally use that kobo as a reader of books too ...
